Preparation

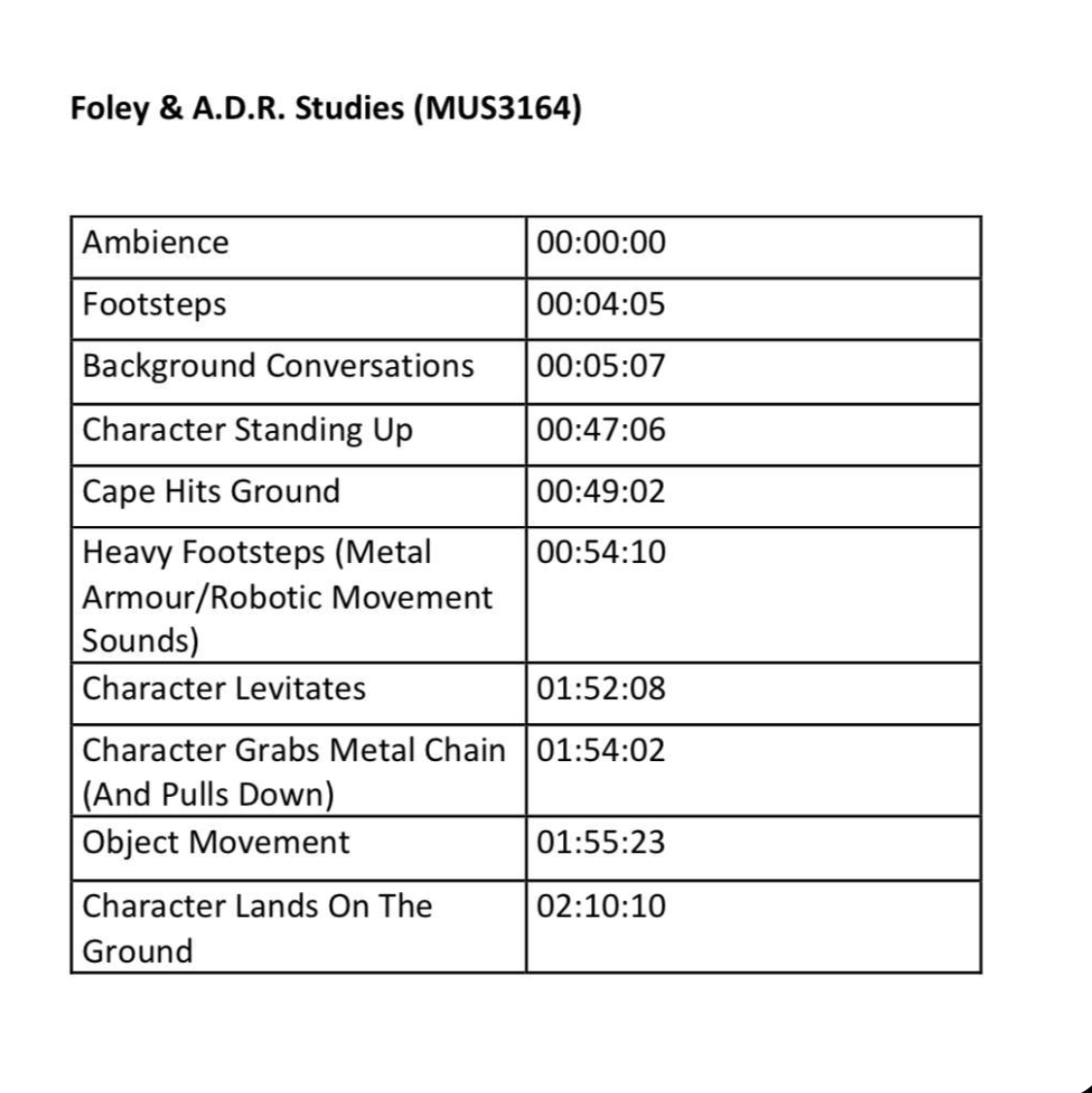

The first step in preparation was creating a table of all the sounds we had to produce for the clip, along with the time stamps at which each sound begins. Below is a copy of this table. For this assignment, George Fetton, Ben Jackson, Patricio and I chose a clip from Avengers: Age of Ultron, specifically the scene in which the Maximoff twins meet Ultron for the first time. For this clip, we needed to create sounds for the ambience, footsteps, background conversations, Ultron standing up, a cape hitting the ground, heavy footsteps, Ultron levitating, Ultron grabbing a chain that makes an object move, and Ultron landing on the ground. We went through the clip identifying which sounds were needed to create the best soundscape possible and noted the time stamp for each one.

After we identified which sounds were needed for the scene, we decided to divide the workload between the members of the group, because our schedules differed and travelling to meet was difficult. I was assigned the sounds for the cape hitting the ground, the footsteps, and the chain being pulled to make the object move. I then began planning how to recreate these sounds as well as I could with the equipment I had at home. For the footsteps, I would record myself stepping on the spot, and for the cape I would wave a towel in the same way Ultron moves his cape on screen. The sound I struggled most to plan was the object moving, which I was eventually able to record simply by knocking a piece of wood with my knuckles.

Recording

I began by recording the footsteps for the scene in which the Maximoff twins walk into Ultron’s lair. In this section the ground is gravelly and coarse, so I looked for a piece of ground similar to the path in the scene. I found a small section of concrete paving covered in a debris of small stones and sand-like material. I recorded with my Audio-Technica AT2020 condenser microphone into a Logic Pro X project. I used this microphone because it is extremely sensitive and picks up very quiet movements, which is necessary when recording low-level sounds such as walking. That sensitivity also means it picks up background noise, which I reduced by using wooden slabs to block the wind and other external sounds. I recorded myself stepping in time with the characters by playing the clip as I recorded. On the first recording, I noticed that the sound was not close enough to the original clip: the characters seemed to step and slide their feet on the gravel rather than simply stepping naturally. I re-recorded the footsteps twice, this time stepping and then flicking the end of my foot to create the sliding sound. I recorded several takes so that I could layer them and give each footstep a slightly different sound, making the result as natural as possible.

For the sound of the cape hitting the ground, I split the sound into two sections. The first is Ultron spinning to face the Maximoff twins, with the cape sweeping through the air, and the second is the cape landing on the ground. I decided to record these separately, again using my Audio-Technica AT2020. I tried several different items to recreate the sound of the cape waving. The first was a barbering cape, as I believed its material was the most similar to the cape worn by Ultron. However, when I recorded it, the cape was too light and made a crinkling sound that was unsuitable: it was not loud enough and it had the wrong texture. I then tried a jumper, but this was too thick and did not produce a sound that was loud enough or similar enough. Finally, I used a bath towel, which had the right tone and texture. It was long enough to generate a loud but soft sound that flicked at the end, which suited the recreation well. For the second section, the cape hitting the floor, I recorded the same towel dropping onto the floor and, with some slight editing, this worked well.

The object moving, as previously stated, was rather difficult to reproduce. Because of its deep metallic sound and slow rhythm, very few things other than a machine itself could recreate it accurately. To get around this, I tried knocking on different surfaces to find a sound and tone similar to a chain moving and the rhythmic ticking of the machinery. I settled on knocking the wood of a windowsill several times, again using the Audio-Technica AT2020. I then stretched the audio in Logic Pro X and pitch-shifted it down by an octave. This produced a slow, melodic clicking noise that suited the scene well.
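As a rough illustration of why stretching and lowering pitch go together, the sketch below slows a recording to half speed by inserting an interpolated sample between every pair of samples. Played back at the original sample rate, the result is about twice as long and one octave lower. This is only a minimal sketch of the underlying idea, not what Logic Pro X does internally; the function names (`slow_to_half_speed`, `sine`, `count_zero_crossings`) and the 440 Hz test tone are assumptions made for the example.

```python
import math


def slow_to_half_speed(samples):
    """Return a copy of `samples` roughly twice as long (hypothetical helper).

    A linearly interpolated sample is inserted between each pair of
    neighbours. At the same playback rate this halves every frequency,
    which is a drop of one octave.
    """
    if len(samples) < 2:
        return list(samples)
    out = []
    for a, b in zip(samples, samples[1:]):
        out.append(a)
        out.append((a + b) / 2.0)  # midpoint between neighbouring samples
    out.append(samples[-1])
    return out


def sine(freq_hz, seconds, sample_rate=44100):
    """Generate a test tone standing in for the recorded knock (assumption)."""
    n = int(seconds * sample_rate)
    return [math.sin(2 * math.pi * freq_hz * i / sample_rate) for i in range(n)]


def count_zero_crossings(samples):
    """Count sign changes, a crude stand-in for measuring pitch."""
    return sum(1 for a, b in zip(samples, samples[1:]) if (a < 0) != (b < 0))


knock = sine(440, 0.1)
slowed = slow_to_half_speed(knock)
```

Here `slowed` has the same number of zero crossings as `knock` but spread over about twice as many samples, so the tone is heard at half the frequency.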

Another part of the recording that I was involved in was the dialogue of Wanda Maximoff. Luckily, I was able to travel into the studio to record this, which made the process easier. For this, we used an Audio-Technica AT897 mini boom microphone set in the middle of three partitions, which helped block out external noise, with a pop shield to help with sibilance. We used the Avid C24 controller to make recording and editing in Pro Tools smoother. We each had a script to read from, which helped us remember our lines, and we had the original scene playing in the background in the Pro Tools session to keep us in time and help us deliver the words exactly as the characters did. Unfortunately, when we came back to this file, this audio was lost, even though the Pro Tools session had been saved and closed properly, and I was not there to re-record the scene. Because of this, Ezz Dudley stepped in and recorded Wanda’s part on my behalf.

The sounds recorded individually by the other members of the group were:

- Character standing up

- Heavy footsteps – Ultron walking

- Character landing

- Background chatting and ambience

- Character levitates

The character standing up, done by Ben Jackson, was created by dropping a heavy blanket on the floor. The heavy footsteps, recorded by George Fitton, were created by knocking two weights together in time with the character, and the character landing was done by dropping these weights onto a desk. For the ambience and background chatter, Ben, Patricio and George went into Ormskirk town centre and recorded the natural sounds of the area. For Ultron levitating, we decided to drag a metal weight across a metal bar. Later in editing, Ben stretched the audio to make it deeper and longer so that it fitted the scene more accurately and sounded almost like a jet pack. For all these recordings, an Audio-Technica AT897 shotgun microphone was used with a windscreen over the top. A shotgun microphone has several advantages: its narrow pickup pattern records a crisp, accurate sound with minimal background interference, and it can record from further away, making it less intrusive.

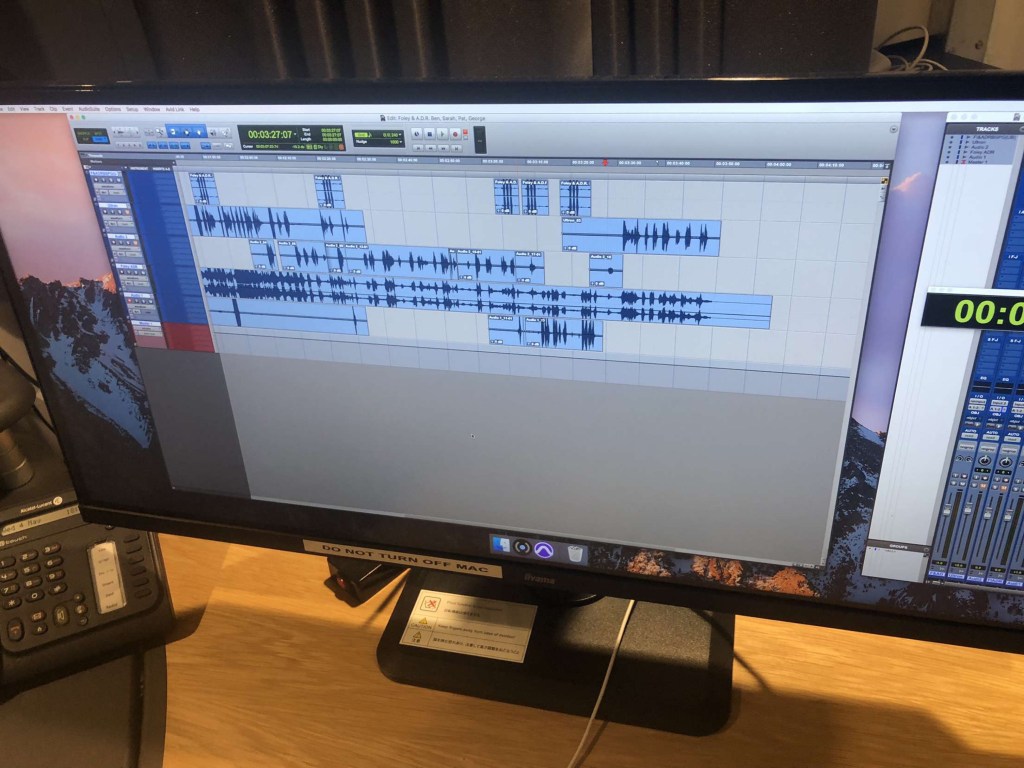

Ben Jackson oversaw the editing and synchronisation of the piece, ensuring that the levels of the sounds were similar and that nothing was overpowering, clipping or inaudible in any way. He also synchronised all the audio so that each sound was in exactly the right place, and faded the beginning and end of every clip for seamless transitions.
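The fades and level checks described above were done by ear and eye in the editing software. Purely as an illustration of what those two steps do to the audio, here is a minimal sketch that applies a linear fade to both ends of a clip and reports whether its peak reaches full scale. The function names, the linear fade shape and the `1.0` full-scale ceiling are assumptions for the example, not the settings used in the session.

```python
def apply_fades(samples, fade_len):
    """Linearly fade the first and last `fade_len` samples (hypothetical helper)."""
    out = list(samples)
    n = len(out)
    fade_len = min(fade_len, n // 2)  # keep the two fades from overlapping
    for i in range(fade_len):
        gain = i / fade_len           # ramps from 0.0 up towards 1.0
        out[i] *= gain                # fade in at the start
        out[n - 1 - i] *= gain        # fade out at the end
    return out


def peak(samples):
    """Largest absolute sample value in the clip."""
    return max((abs(s) for s in samples), default=0.0)


def is_clipping(samples, ceiling=1.0):
    """True if the clip reaches the assumed full-scale ceiling."""
    return peak(samples) >= ceiling


clip = [1.0] * 10
faded = apply_fades(clip, 4)
```

Starting and ending each clip at zero level is what avoids the audible click that an abrupt cut can produce.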

Conclusion

I believe that, although it was a difficult scene to recreate, especially with travelling and differing schedules, we collectively produced a recreation that fits the scene very well. We created seamless audio, free of clipping, that matched the scene closely. The biggest challenge faced throughout the entire process was not the project itself but the differing schedules of the individuals in the group, which made it difficult for all members to be in one place at the same time. To minimise the impact, we would either FaceTime the individual who could not make it to a session, or have a call after the session to fill the absent members in on what they had missed and ask for input on what we should do in the next session. Another thing that set us back slightly was the missing audio from the dialogue. This resulted in us having to completely re-record everything we did in the previous sessions. Despite this, we were able to record it all with plenty of time to spare.